
How to Sync Audio to Video Like a Pro Editor

Getting your audio and video to line up perfectly is one of those fundamental, make-or-break steps in post-production. It's often the invisible craft that separates a polished, professional piece from something that just feels... off. We'll get into the nitty-gritty of how to do it, but first, let's talk about why this is such a crucial skill in the first place, especially when working with dual-system sound.

Why Perfect Audio Sync Is Non-Negotiable

Before we jump into the timeline, it’s worth understanding the why. Nothing pulls an audience out of a story faster than dialogue that doesn't match the speaker's lips. It’s jarring. It shatters the illusion and instantly signals that something is wrong, making even the most compelling content feel amateur. This is why syncing audio and video isn't just a technical task; it's a core part of the storytelling craft.

The need for this skill almost always comes from a professional workflow called dual-system sound. On set, crews rarely rely on the tiny, often low-quality microphone built into the camera. Instead, they use high-end external audio recorders to capture crisp, clean, and dynamic sound. The upside is far superior audio quality. The downside? You end up with two separate sets of files—video from the camera and audio from the recorder—that have to be meticulously merged back together.

From Silent Films to Digital Timelines

This challenge is as old as sound in cinema itself. The whole game changed in the late 1920s with the arrival of "talkies." The Jazz Singer in 1927 is famous as the first feature-length film with synchronized dialogue sequences, and it demanded completely new workflows to lock sound and picture together. While our tools have evolved dramatically since then, the core problem remains the same.

Today, editors have a whole arsenal of methods to achieve that perfect sync. Depending on what was done on set and the tools at your disposal, one approach might work better than another. Understanding all three gives you the flexibility to handle anything a project throws at you.

Three Core Audio Syncing Methods at a Glance

Here’s a quick rundown of the main techniques you'll encounter. Each has its place, and knowing when to use which is key.

| Sync Method | Best For | Required Tools | Skill Level |
|---|---|---|---|
| Manual Waveform | Simple setups, interviews, or when no clapper was used | Your NLE's timeline | Beginner |
| Clapperboard/Timecode | Professional productions, multi-cam shoots | Clapperboard, timecode generator | Beginner to Intermediate |
| Automatic Software Sync | Most modern workflows, for speed and efficiency | Premiere Pro, Final Cut Pro, DaVinci Resolve | Beginner |

Ultimately, you can get a perfect sync with any of these methods. The right one just depends on how the footage was shot.

The ultimate goal is to make the technology invisible. When audio and video are perfectly synced, the audience doesn't think about the process—they simply experience the story. That's the mark of a skilled editor.

Mastering these techniques is your foundation. As you get deeper into editing, you'll see how this initial sync accuracy is critical for everything that follows, especially in modern review and approval workflows. Tools like those from PlayPause rely on a solid sync to keep feedback precise and help you get to the finish line faster.

Mastering Manual Sync: The Editor's Essential Skill

Automated syncing is a game-changer, no doubt. But what happens when the software gets it wrong? Or when you’re dealing with noisy scratch audio that the algorithm can’t decipher? This is where knowing how to sync by hand becomes your most valuable skill.

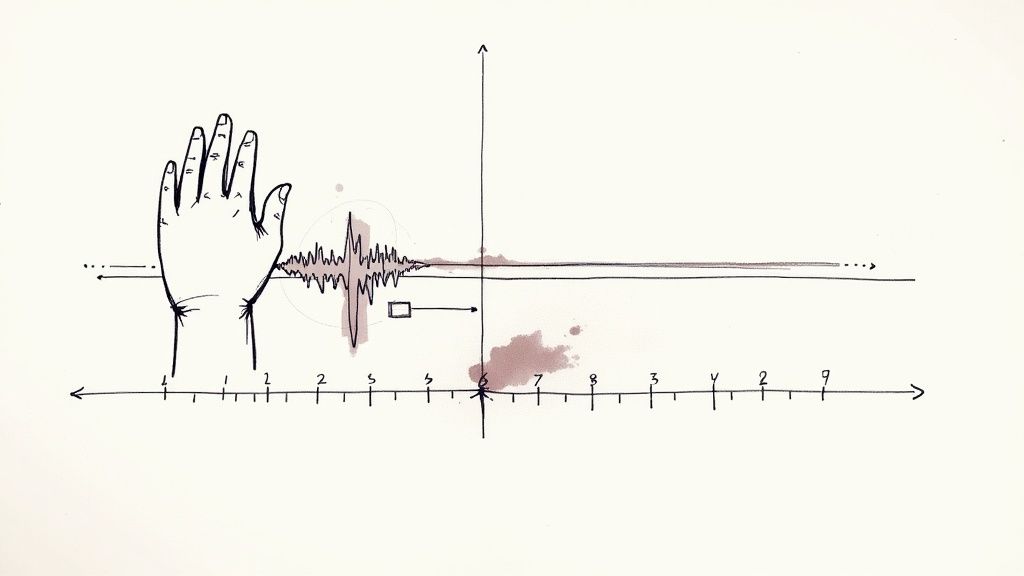

Mastering manual sync is more than just a backup plan; it's a fundamental part of an editor's toolkit. It gives you an intuitive feel for your media and absolute control when you need it most. The whole process boils down to finding one single, shared moment in time—a distinct, sharp sound that you can both see in the video and hear in the audio. That moment is your anchor.

The Classic Method: Using a Slate or Hand Clap

The clapperboard, or slate, is the most iconic tool for a reason. That sharp "clap" creates a massive, unmistakable spike in your audio waveform, while the visual of the sticks hitting gives you a precise frame to lock onto. It’s purpose-built for syncing.

Your mission is to hunt down the exact frame where the slate's clapper first makes contact. Simultaneously, you’ll find the very beginning of that loud clap in your external audio track. Line those two points up, and you’re synced. It's that simple and that precise.
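To make "the very beginning of that loud clap" concrete, here is a minimal sketch of a crude onset detector: it returns the first sample that reaches half the track's peak level. It assumes the recorder's audio is already decoded into a list of mono float samples and that the clap is the loudest event in the excerpt you pass in; the function name and the 50% threshold are illustrative, not how any particular NLE works.

```python
def find_clap_onset(samples, sample_rate, threshold_ratio=0.5):
    """Return the time (seconds) of the first sample whose magnitude reaches
    threshold_ratio of the loudest sample in the track."""
    peak = max(abs(s) for s in samples)
    if peak == 0:
        raise ValueError("track is silent; no clap to find")
    threshold = peak * threshold_ratio
    # The peak sample itself always meets the threshold, so next() always finds one.
    first = next(i for i, s in enumerate(samples) if abs(s) >= threshold)
    return first / sample_rate

# Synthetic test track: 1 s of low noise floor with a decaying clap at 0.25 s.
rate = 48_000
track = [0.01] * rate
clap_start = int(0.25 * rate)
for i in range(200):
    track[clap_start + i] = 0.9 * (1 - i / 200)

print(find_clap_onset(track, rate))  # 0.25
```

Multiply the returned time by your frame rate to see which frame the clap should land on, then compare it with the frame where the sticks visibly meet.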

No slate? No problem. A good, sharp hand clap in front of the camera before a take does the exact same job. It's a low-tech solution that provides a high-quality sync point, often called a "soft slate." This is standard practice on smaller documentary or corporate shoots where a full clapperboard might be too intrusive.

Pro Tip from the Edit Bay: When using a hand clap, tell the person on camera to start with their hands open and visible, then bring them together quickly. This creates a much clearer visual impact, making it far easier to pinpoint the exact frame of contact.

Syncing by Waveform: When There's No Slate in Sight

So, what do you do when there's no slate or clap? This happens all the time in run-and-gun interviews, event coverage, or B-roll shoots. This is where you learn to read the audio waveforms themselves, matching the patterns between your camera's scratch audio and your clean external audio.

You have to zoom way into your timeline, enough to see the detailed contours of the sound waves. You’re looking for unique audio signatures—and sharp consonant sounds in dialogue are your best friends here.

Hard sounds like “P,” “T,” or “K” create distinct, sharp peaks in the waveform that are far easier to align than soft, rolling vowel sounds. In an interview, the word “Production” or “Technique” at the start of a sentence can serve as a perfect, accidental sync marker.

Here’s a practical rundown of how it works:

Find a Target: Listen to the first few seconds of dialogue and pick a word with a hard, plosive consonant.

Locate the Peaks: Find the spike for that sound on both your camera's scratch audio and your external audio. The camera’s waveform will be smaller and noisier, but the basic shape should be a match.

Align and Nudge: Drag your external audio clip until the two peaks are roughly aligned. Then, zoom in and nudge the clip frame-by-frame until they are perfectly stacked.

Check for Phase: Play both tracks together. If they're perfectly synced, the sound will be full and centered. If you hear a weird echo or a "flanging" sound, you're likely off by a single frame. Nudge it again until that echo vanishes.
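To put "off by a single frame" in perspective, this quick sketch converts one frame into milliseconds at common frame rates. It represents the NTSC-derived rates as exact fractions (23.976 fps is 24000/1001 and 29.97 fps is 30000/1001); the helper name is illustrative.

```python
from fractions import Fraction

# Exact frame rates; the NTSC-derived rates are n * 1000/1001.
FRAME_RATES = {
    "23.976": Fraction(24000, 1001),
    "24": Fraction(24),
    "25": Fraction(25),
    "29.97": Fraction(30000, 1001),
}

def frame_duration_ms(fps):
    """Duration of one video frame in milliseconds."""
    return float(1000 / fps)

for name, fps in FRAME_RATES.items():
    print(f"{name} fps: one frame = {frame_duration_ms(fps):.2f} ms")
```

At these rates a single frame lasts roughly 33 to 42 ms, which is plenty to hear as a doubled, echoey sound when two tracks are stacked.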

This skill is absolutely invaluable. Picture syncing a multi-camera musical performance where the drummer gives a single snare hit before the song starts. That one hit becomes your universal sync point, allowing you to align every camera and audio source with confidence. Manual syncing gives you the precision to tackle these complex jobs like a pro.

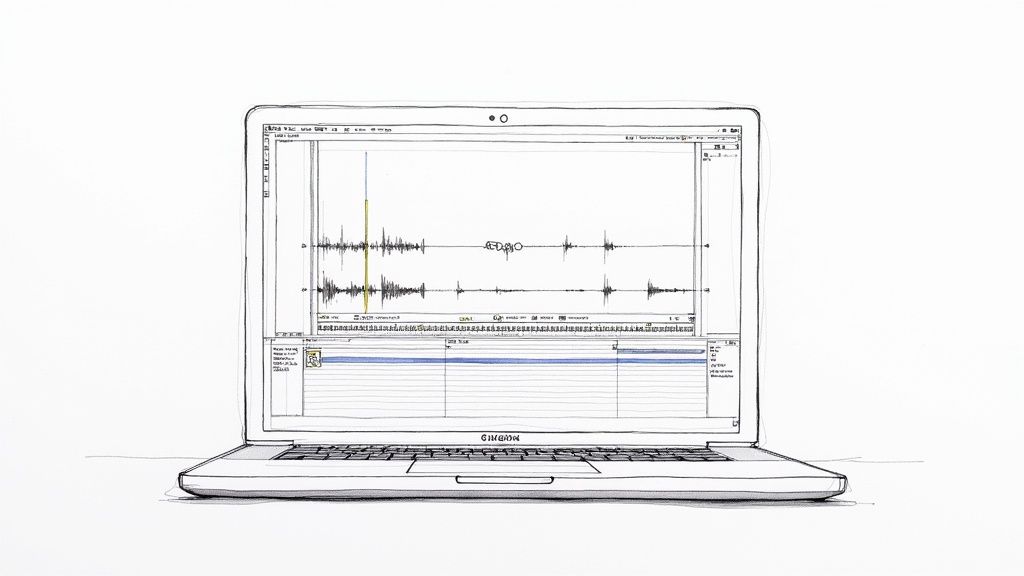

Letting Your NLE Do the Heavy Lifting: Automated Sync Tools

While lining up waveforms by hand is a fundamental skill every editor should have, the reality of modern post-production is that time is money. This is where your Non-Linear Editor (NLE) becomes your best assistant.

Modern editing software has incredibly smart, powerful tools built right in that can handle the bulk of your sync work in seconds. These features analyze and match audio waveforms or read timecode, freeing you up to focus on what really matters: telling a great story. Learning to use these functions is standard practice for a reason—they're fast, surprisingly accurate, and can process dozens of clips at once.

The Magic Behind Waveform Sync

So, how does it work? The software intelligently "listens" to the low-quality scratch audio recorded by your camera and compares it to the high-quality external audio from your dedicated recorder. It hunts for matching patterns—the peaks and valleys in the soundwaves—and then aligns the clips with near-perfect precision on your timeline.
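That pattern hunt is commonly done with cross-correlation: slide one signal against the other and keep the offset where they agree best. The sketch below is a brute-force, pure-Python illustration on synthetic data, not the algorithm any particular NLE uses; production code would typically use an FFT-based correlation on downsampled audio. The function name and the `max_lag` search window are assumptions.

```python
import random

def best_lag(reference, other, max_lag):
    """Brute-force cross-correlation: return the lag (in samples) at which
    `other` best matches `reference`. A positive lag means the same event
    happens `lag` samples later in `other`."""
    best, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        score = 0.0
        for i, ref_value in enumerate(reference):
            j = i + lag
            if 0 <= j < len(other):
                score += ref_value * other[j]
        if score > best_score:
            best, best_score = lag, score
    return best

# Synthetic example: one "performance" captured by two devices.
rng = random.Random(7)
source = [rng.uniform(-1, 1) for _ in range(1500)]
external = list(source)                        # clean recorder, rolled first
camera = [0.3 * v + 0.05 * rng.uniform(-1, 1)  # quieter, noisier scratch audio
          for v in source[100:1100]]           # camera rolled 100 samples later

lag = best_lag(camera, external, max_lag=200)
print(lag)                                        # 100
print(f"{lag / 48_000 * 1000:.2f} ms at 48 kHz")  # 2.08 ms at 48 kHz
```

With the camera's scratch track as the reference, a lag of 100 means the recorder's clip has to start 100 samples (about 2 ms at 48 kHz) before the camera clip on the timeline to line up.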

This is a monumental time-saver. You're essentially asking the computer to do the same meticulous work you would do by hand, but at a speed no human can possibly match. What used to take hours of nudging clips back and forth now takes just a few clicks.

How to Sync in Adobe Premiere Pro

Premiere Pro has one of the most straightforward and reliable sync tools out there, making it a favorite for many editors.

First, drop your video clip (with its scratch audio) onto a track in the timeline. Place the separate, high-quality audio clip on a track directly below it. You don't need to be perfect, but getting them roughly aligned can sometimes help the software.

Next, select both clips. You can do this by holding the Shift key and clicking each one, or just by dragging a selection box over them. With both highlighted, right-click and choose Synchronize from the menu.

A dialog box will pop up with a few choices:

Clip Start / Clip End: These are rarely useful for dual-system sound.

Timecode: The one to use if your gear was synced with professional timecode generators on set.

Audio: This is the one you’ll use 95% of the time. It tells Premiere to match the soundwaves.

Just select Audio and click OK. Premiere will analyze the tracks, and like magic, your external audio will snap right into place, perfectly synced with your video. Now you can mute, disable, or delete the camera's original audio track, leaving just the pristine sound.

How to Sync in DaVinci Resolve

DaVinci Resolve's auto-sync tools can massively streamline your workflow, too. Resolve's approach is a bit different but just as effective, focusing on media management before you even start cutting.

Instead of syncing on the timeline, you’ll usually do it right in the Media Pool. This is a fantastic way to keep your project tidy from the get-go.

Here’s the typical workflow:

Select Your Clips: In the Media Pool, find the video clip and its matching audio file. Select them both.

Find the Sync Menu: Right-click the selected clips and go to Auto Sync Audio.

Pick Your Method: You'll see options to sync based on Timecode or Waveform. If you didn't have a timecode box on set, choose Based on Waveform.

Resolve will process the files and link them. The best part? It doesn't create new, clunky media files. It simply tells the video clip to use the new, high-quality audio by default. Now, every time you drag that video clip to the timeline, it will automatically bring the synced sound with it.

A Quick Tip on Organization: Syncing in Resolve's Media Pool builds great habits. By prepping your clips before they hit the timeline, you ensure that every instance of that shot already has the correct audio attached. This is a lifesaver on bigger, more complex projects.

How to Sync in Final Cut Pro

Final Cut Pro also has a robust and speedy way to handle syncing. It works by creating a new, "synchronized clip" that neatly bundles your video and high-quality audio together, keeping your browser and timeline clean.

The process is incredibly simple. In the browser (Final Cut Pro’s media pool), select the video and audio clips you want to sync up. You can hold the Command key while clicking to select multiple files.

With your clips selected, just right-click and choose Synchronize Clips. A new window will pop up where you can name your new clip. The crucial step here is to make sure the box for Use audio for synchronization is checked. This is what tells Final Cut to analyze the waveforms.

Click OK, and a brand new clip will appear in your browser. This is your synchronized clip. Drag it into the timeline, and you're ready to edit with perfect sound.

What to Do When Audio Sync Goes Wrong

Sooner or later, you're going to hit a sync problem that automated tools can't solve and manual methods don't immediately fix. It happens to everyone. This is where your technical know-how really comes into play, and learning to diagnose these issues is what separates the pros from the amateurs.

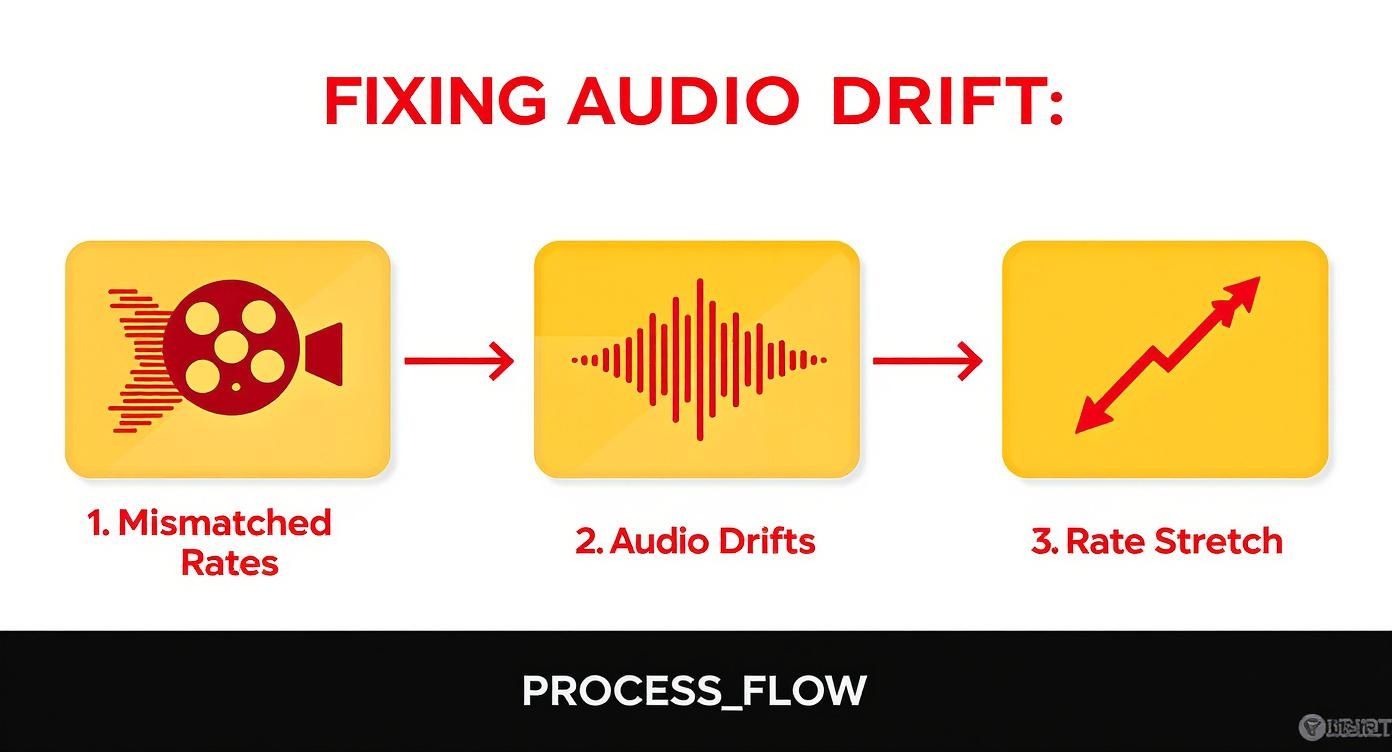

The most common and frustrating problem by far is audio drift. You line everything up perfectly at the start of a long take, but by the end, the audio is mysteriously out of sync. The dialogue is just slightly off from the speaker's lips, and the whole thing feels... wrong. This isn't just bad luck; it's a math problem.

Drift almost always boils down to one thing: your camera and external audio recorder weren't keeping the same time. Usually they were set to slightly different frame rates or sample rates; on long takes without timecode, their internal clocks can also run at very slightly different speeds.

Tackling Audio Drift Head-On

Let's imagine a real-world scenario. Your camera is shooting at 23.976 frames per second, a standard for cinematic looks. But your sound mixer, in a rush, left the audio recorder set for a true 24 fps project. For a few seconds, you'd never notice. But stretch that out over a 10-minute interview, and that tiny, fractional difference builds up, causing the audio to slowly pull away from the video.

The same thing can happen with mismatched audio sample rates. If your video project is set to the professional standard of 48 kHz but the audio was recorded at 44.1 kHz (a holdover from the music CD era), and that mismatch isn't handled correctly somewhere in the chain, one file ends up playing back at a different speed than the other.
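Here is the arithmetic behind the 23.976 vs. 24 fps scenario above, as a quick sketch. It assumes the two devices' timing differs by exactly the ratio of those two rates (23.976 fps is exactly 24000/1001), which works out to about 0.1%; the function name is made up for illustration.

```python
from fractions import Fraction

CAMERA_FPS = Fraction(24000, 1001)  # "23.976" fps, exactly
RECORDER_FPS = Fraction(24)         # the mismatched setting in the example

def drift_seconds(take_seconds, fps_a=CAMERA_FPS, fps_b=RECORDER_FPS):
    """Timing error (seconds) accumulated over a take when two devices run at
    rates fps_a and fps_b; it grows linearly with the length of the take."""
    return float(take_seconds * abs(1 - fps_a / fps_b))

for minutes in (1, 10):
    drift = drift_seconds(minutes * 60)
    print(f"after {minutes} min: {drift * 1000:.0f} ms "
          f"(about {drift * float(CAMERA_FPS):.1f} frames)")
```

That comes to roughly 60 ms after one minute but about 0.6 s (over 14 frames) after ten, which is why the first few seconds look fine and the end of the interview does not.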

So how do you fix this mess? You have to "stretch" or "shrink" the audio to match the video's exact length, but without making your talent sound like a chipmunk or James Earl Jones. Most editing software has a tool built for this, like the Rate Stretch tool in Premiere Pro or similar functions in Final Cut Pro and DaVinci Resolve.

Here’s my go-to process:

Nail the beginning: First, get the very start of the clips perfectly synced. Use the clapper slate or find a sharp, percussive sound (like a "p" or "t" in dialogue) and line it up.

Jump to the end: Now, go to the last frame of the clip and see how far off the audio has drifted.

Stretch to fit: Grab your NLE's rate stretch tool and drag the end of the audio clip, either stretching it or compressing it until it snaps perfectly to the end of the video.

This simple action forces the NLE to recalculate the audio's playback speed to match the video's duration precisely, killing the drift.
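The stretch itself is a simple proportion: the speed to apply is the audio clip's current length divided by the length it needs to fill. A minimal sketch, assuming you've measured both durations after syncing the start; the helper name and the example numbers are hypothetical. Premiere's Speed/Duration dialog expresses the result as a percentage and includes a Maintain Audio Pitch option to avoid the chipmunk effect.

```python
def stretch_speed_percent(audio_seconds, video_seconds):
    """Playback speed (%) that makes an audio clip of `audio_seconds` last
    exactly `video_seconds`. Above 100% shortens it; below 100% lengthens it."""
    if video_seconds <= 0:
        raise ValueError("target duration must be positive")
    return audio_seconds / video_seconds * 100

# Hypothetical measurement: after syncing the start, the audio clip runs
# 0.6 s longer than a 600 s video clip.
speed = stretch_speed_percent(600.6, 600.0)
print(f"set audio speed to {speed:.3f}%")  # set audio speed to 100.100%
```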

A tiny sync discrepancy can completely ruin the viewing experience. Even a few frames off can make a production feel cheap or unprofessional. Getting this right isn't just a technical step; it's essential for maintaining quality.

When Your Automated Sync Throws in the Towel

Another classic headache is when you drop your clips into the timeline, hit the "synchronize" button, and the software just says... no. When an NLE tells you it can't find a match, it's usually because the scratch audio from the camera is garbage.

Here are the usual suspects:

The environment was too loud: The camera was probably too far from the talent, and its cheap built-in mic picked up more air conditioning hum and background chatter than actual dialogue. The software has no clean dialogue to compare against.

The audio is too quiet: If the talent was speaking softly or there are no sharp, clear sounds, the waveform will look more like a gentle hill than a spiky mountain range. The algorithm has nothing to grab onto.

Someone forgot to turn the camera mic on: It happens to the best of us. If the camera's internal mic gain was at zero or turned off, you have no reference audio to sync with. Simple as that.
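Before falling back to manual sync, you can quickly measure whether a scratch track gives the algorithm anything to work with. This sketch reports the RMS level in dBFS and the crest factor (peak over RMS) in dB: near-silence points to a mic that was off or too quiet, and a low crest factor matches the "gentle hill" waveform with no sharp peaks. It assumes decoded mono float samples, and the -50 dBFS and 10 dB thresholds are illustrative guesses, not values any NLE publishes.

```python
import math

def level_report(samples, min_rms_db=-50.0, min_crest_db=10.0):
    """Return (rms_dbfs, crest_db, usable) for float samples in [-1, 1].

    rms_dbfs: average level relative to digital full scale.
    crest_db: peak-to-RMS ratio; low values mean a flat waveform with
    no sharp transients for a sync algorithm to latch onto.
    """
    peak = max(abs(s) for s in samples)
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    if rms == 0:
        return float("-inf"), 0.0, False  # digital silence: mic off or gain at zero
    rms_db = 20 * math.log10(rms)
    crest_db = 20 * math.log10(peak / rms)
    return rms_db, crest_db, (rms_db >= min_rms_db and crest_db >= min_crest_db)

rate = 48_000
silence = [0.0] * rate
hum = [0.1 * math.sin(2 * math.pi * 60 * n / rate) for n in range(rate)]  # steady 60 Hz hum
speech_like = [0.02] * 990 + [0.8] * 10  # quiet bed with a few sharp spikes

for name, clip in [("silence", silence), ("hum", hum), ("speech-like", speech_like)]:
    rms_db, crest_db, usable = level_report(clip)
    print(f"{name}: RMS {rms_db:.1f} dBFS, crest {crest_db:.1f} dB, usable={usable}")
```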

When automated tools fail, you have no choice but to roll up your sleeves and go back to manual syncing. Look for a visual cue—a handclap, a door slam, even a person's head nod—and align it with the corresponding sound on your clean audio track. It’s tedious work, but sometimes it’s the only way.

The truth is, viewers are incredibly sensitive to sync issues. The European Broadcasting Union's standards define a very tight window of acceptability for broadcast: audio should lead the video by no more than 40 milliseconds and lag behind it by no more than 60 milliseconds. If you want to dive deeper, you can find these technical specs in their published broadcaster guidelines.
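As a sketch, here is that window expressed as a check on a measured offset. The sign convention (positive means the audio is early) and the function name are assumptions for illustration; the 40 ms and 60 ms limits are the figures quoted above.

```python
from fractions import Fraction

MAX_LEAD_MS = 40  # audio may arrive at most this early
MAX_LAG_MS = 60   # audio may arrive at most this late

def within_tolerance(offset_ms):
    """True if an A/V offset in milliseconds (positive = audio leads the
    picture, negative = audio lags) falls inside the tolerance window."""
    return -MAX_LAG_MS <= offset_ms <= MAX_LEAD_MS

one_frame_ms = float(1000 / Fraction(24000, 1001))  # about 41.7 ms at 23.976 fps
print(within_tolerance(one_frame_ms))   # False: one frame early is already too much
print(within_tolerance(-one_frame_ms))  # True: one frame late still fits
```

In other words, at 23.976 fps a single frame of early audio already falls outside the window, while the same error in the other direction just squeaks in.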

Building a Flawless Sync Workflow from the Start

The secret to perfect audio sync? It starts long before you ever drag a clip into your timeline. The best editors know that a bulletproof workflow begins on set, not in the edit bay. It’s all about prevention—a few deliberate steps upfront can save you from a world of technical headaches later.

This means switching from a reactive "I'll fix it in post" mindset to one of proactive planning. It really boils down to clear communication between the production and post-production teams, even if you’re a one-person crew wearing all those hats. Getting your technical standards locked in before the first take is the single most important habit you can build.

Aligning Your Technical Specs Before You Roll

Most sync nightmares, especially that dreaded audio drift on long takes, come from one simple mistake: mismatched settings between devices. Your camera and your external audio recorder must be set to the exact same frame rate and audio sample rate. There’s zero wiggle room here.

For example, if your camera is shooting at 23.976 frames per second (fps) for that cinematic look, your audio recorder needs to be set to a matching project frame rate of 23.976 fps. A classic mistake is leaving the audio recorder at its default, often 30 fps. The files might seem fine for a 30-second clip, but that tiny difference will slowly pull the audio out of sync over a 10-minute interview.

The same principle applies to your audio sample rate. The industry standard for video is 48 kHz. If your audio is accidentally recorded at 44.1 kHz (the standard for music), you’ve just baked a sync problem right into your source files.

Frame Rate Consistency: Double-check that every camera and audio recorder on set is locked to the identical frame rate.

Sample Rate Standard: Verify all audio devices are recording at 48 kHz and, for best results, at a bit depth of 24 bits.

Pre-Shoot Checklist: Make these checks a non-negotiable part of your setup routine, right alongside checking batteries and formatting cards.

This infographic lays out exactly how those initial mismatched settings create drift, forcing a fix in post that could have been avoided altogether.

As you can see, what starts as a simple oversight on set turns into a problem that requires manually stretching the audio in your NLE to make it fit.
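Part of that checklist can also be automated once files come off the recorder. This sketch uses Python's standard `wave` module to flag WAV files that aren't 48 kHz / 24-bit. It assumes plain PCM WAV files; the folder name is hypothetical, and older Python versions may reject the WAVE_FORMAT_EXTENSIBLE headers some multichannel recorders write.

```python
import wave
from pathlib import Path

EXPECTED_RATE = 48_000  # Hz
EXPECTED_BYTES = 3      # 24-bit samples are 3 bytes wide

def check_wav(path):
    """Return a list of problems found in one WAV file's header."""
    problems = []
    with wave.open(str(path), "rb") as wav:
        if wav.getframerate() != EXPECTED_RATE:
            problems.append(f"sample rate {wav.getframerate()} Hz")
        if wav.getsampwidth() != EXPECTED_BYTES:
            problems.append(f"bit depth {wav.getsampwidth() * 8}-bit")
    return problems

def check_folder(folder):
    """Map each .wav file in `folder` to its list of problems (empty = OK)."""
    return {p.name: check_wav(p) for p in sorted(Path(folder).glob("*.wav"))}

if __name__ == "__main__":
    # "sound_roll_01" is a hypothetical folder of recorder files.
    for name, problems in check_folder("sound_roll_01").items():
        print(name, "OK" if not problems else ", ".join(problems))
```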

The Power of Timecode

If you’re running more than one camera, using a timecode generator is an absolute game-changer. These little boxes feed a continuous, frame-accurate time signal to all your cameras and audio recorders, essentially giving every file the exact same clock.

Think of it like this: timecode gives every single frame of video and audio a unique, matching address. When you get into your editing software, you're not trying to visually match waveforms or clapper boards. You’re just telling the software, "Match these timecode addresses," and bam—perfect, instant sync. It’s easily the most reliable method out there.
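Under the hood, "matching addresses" is just arithmetic on frame counts. A minimal sketch, assuming non-drop-frame timecode counted at 24 frames per second (the usual labeling for 23.976 fps footage); drop-frame 29.97 timecode needs extra rules not handled here. The function names and timecode values are hypothetical.

```python
def timecode_to_frames(tc, frames_per_second=24):
    """Convert non-drop-frame 'HH:MM:SS:FF' timecode to a frame count."""
    hours, minutes, seconds, frames = (int(part) for part in tc.split(":"))
    if frames >= frames_per_second:
        raise ValueError(f"frame field {frames} out of range in {tc}")
    return ((hours * 60 + minutes) * 60 + seconds) * frames_per_second + frames

def sync_offset_frames(video_start_tc, audio_start_tc, frames_per_second=24):
    """Frames between the video clip's first frame and the audio file's start.
    Negative means the audio started rolling earlier, so it sits that many
    frames before the video clip on the timeline."""
    return (timecode_to_frames(audio_start_tc, frames_per_second)
            - timecode_to_frames(video_start_tc, frames_per_second))

print(sync_offset_frames("14:03:20:00", "14:03:18:12"))  # -36
```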

Timecode isn't a luxury; it's a professional necessity on complex shoots. The initial cost and setup time are minuscule compared to the hours you'll save trying to manually sync a dozen different sources in post.

Embracing Modern Camera-to-Cloud Workflows

Today’s productions are increasingly using Camera-to-Cloud (C2C) services. These platforms are incredible because they automatically upload proxy files from the camera and audio recorder directly to the cloud while you are still recording.

This workflow is a huge win for sync. It preserves all the crucial metadata—timecode, file names, everything—from the moment of capture, eliminating the risk of human error during manual file transfers and backups. For teams wanting to learn more about optimizing their entire process, you can find great articles on the PlayPause blog.

For a deeper dive into all the different methods available, this guide on how to achieve perfect audio-video sync is a fantastic resource. Adopting these modern workflows can turn a tedious chore into a seamless, automated part of your routine.

A Few Common Questions About Audio Sync

Even when you've got the basics down, syncing audio can throw a few curveballs your way. Let's tackle some of the common hurdles and questions that pop up for editors in the trenches.

Can I Sync Audio If My Camera Didn't Record Sound?

Yes, absolutely. But you're going to have to do it the old-fashioned way—by eye. Without any audio from the camera, there's no waveform for software to analyze, so automatic syncing is off the table.

This is precisely where a good old clapperboard saves the day. Your only real option is to sync manually. Scrub through your timeline to find the exact frame where the slate claps shut, then line it up with that sharp, unmistakable peak in your external audio file. If you didn't use a slate, look for another distinct visual and audible cue, like a hand clap or a door slamming shut.

Why Does My Synced Audio Have a Weird Echo?

That slight echo or "flanging" effect is the tell-tale sign that your sync is off by just a frame or two. What you're hearing is the audio from your camera and your external recorder playing almost at the same time, but not quite, which creates phasing issues.

To fix this, you need to get surgical. Zoom way into your timeline, right down to the individual frame level. Mute one of the audio tracks, then nudge the other one forward or backward, one frame at a time. Unmute and listen after each adjustment. That annoying echo will completely disappear the second they are perfectly aligned.

When you nail the sync, the two tracks don't just stop echoing; they actually reinforce each other. The audio suddenly sounds fuller and more centered. That's how you know it's frame-perfect.

What's the Difference Between Sample Rate and Frame Rate?

Both are all about timing, but they measure two completely different things. Confusing them is a classic recipe for sync issues, especially the dreaded audio drift on longer clips.

Frame Rate (fps): This is all about the video. It tells you how many still images, or frames, are shown every second. You'll typically see 24 fps for a cinematic look, 29.97 fps for broadcast TV in NTSC regions like North America (25 fps in PAL regions), or 60 fps for silky-smooth slow motion.

Sample Rate (kHz): This one is pure audio. It measures how many times per second the sound wave is "sampled" to create the digital recording. For any professional video work, the standard is 48 kHz.
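One way to see how the two rates relate is to count how many audio samples play during each video frame. A quick sketch, using exact fractions for the NTSC-derived rates (23.976 fps as 24000/1001 and 29.97 fps as 30000/1001); the helper name is illustrative.

```python
from fractions import Fraction

SAMPLE_RATE = 48_000  # Hz, the video-production standard

def samples_per_frame(fps, sample_rate=SAMPLE_RATE):
    """Audio samples that play during one video frame at the given rate."""
    return Fraction(sample_rate) / Fraction(fps)

for label, fps in [("23.976", Fraction(24000, 1001)), ("24", 24),
                   ("25", 25), ("29.97", Fraction(30000, 1001))]:
    print(f"{label} fps: {float(samples_per_frame(fps)):g} samples per frame")
```

Note that at 29.97 fps a frame doesn't even hold a whole number of samples (1601.6), so both devices really do need to agree on both settings.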

If either setting differs between your camera and your recorder, the audio can slowly but surely drift out of sync over the course of the clip. Getting into complex workflows? Sometimes a little expert advice can prevent a major headache. If you have specific setup questions, feel free to reach out to our team for some guidance.

Is Timecode Really Necessary for Every Project?

Honestly, no, not for every project. But for certain types of shoots, it's an absolute game-changer and a non-negotiable part of a professional workflow. If you're just shooting a simple, single-camera interview, a quick hand clap or waveform sync is perfectly fine.

However, timecode becomes your best friend on:

Multi-camera shoots: Manually syncing three, four, or more cameras from a live event is the stuff of nightmares. With timecode, it's a one-click job.

Long-form recordings: If you're rolling for more than 15-20 minutes at a time—think documentaries or conference recordings—timecode is your best insurance policy against audio drift.

Complex workflows: When you've got a dedicated sound mixer on set with multiple recorders, timecode acts as the universal clock that keeps every single file perfectly locked together from the start.