
Synchronize Sound and Video: The Pro Editor's Guide

Synchronizing sound and video is all about getting your separately recorded audio and video tracks to play together perfectly in your editing software. You need a common reference point—like the classic clapperboard, a simple handclap, or even embedded timecode—to make it happen. This step is absolutely critical because recording audio on a dedicated device almost always gives you far better quality than any in-camera microphone ever could.

Nailing this alignment is the bedrock of professional post-production.

Why Flawless Audio Sync Is a Game Changer

Have you ever watched a video where the dialogue is just a fraction of a second off from the speaker's lips? It’s distracting, right? That tiny mismatch is enough to pull you right out of the moment and make even the most beautifully shot footage feel cheap and unprofessional. Our brains are hardwired to expect sight and sound to match up, and when they don't, the quality of the whole production takes a nosedive.

This is exactly why recording audio and video separately is the professional standard for everything from films and interviews to music videos. An on-camera mic is a compromise; it picks up every unwanted background noise and just can't deliver the rich, clean sound you need. By capturing pristine audio on a separate recorder, you gain massive control over your final mix, but it introduces the essential post-production task of syncing it all up.

The Foundation of Professional Post-Production

Before we jump into the "how-to," it helps to get a handle on the main strategies editors use to get everything in lock-step. The right method depends entirely on your project, whether it's a simple one-camera interview or a complex multi-camera shoot.

Manual Syncing: This is the old-school, hands-on technique. You find a clear audio and visual cue—like the snap of a clapperboard—and manually line up the audio waveform spike with that exact visual moment on your timeline.

Timecode Syncing: For more complex productions, this is the gold standard. Dedicated hardware generates a continuous time signal that gets embedded into both the video and audio files. This lets your software align everything with perfect, frame-accurate precision.

Automated Syncing: Most modern editing software has gotten incredibly smart. These tools can analyze the low-quality "scratch" audio from your camera and compare it to your high-quality external audio, automatically finding a match and syncing the clips for you. It's a huge time-saver.

The challenge of synchronizing sound and video isn't new; it has been at the heart of filmmaking for nearly a century. This process completely changed entertainment back in the late 1920s. By 1930, over 90% of major American films were released with synchronized sound, cementing its role as a core part of the cinematic experience. It's amazing to see how far the technology has come.

Choosing Your Audio Sync Method

Knowing these different approaches gives you a solid game plan. Let's take a quick look at how these methods stack up, so you can choose the best one for your next project.

This table gives a quick look at the primary methods for synchronizing audio and video, outlining their best use cases and relative complexity.

| Syncing Method | Best For | Key Tool | Complexity |
|---|---|---|---|
| Manual Sync | Simple setups, one or two cameras | Clapperboard, handclap, visual cues | Low to Medium |
| Timecode Sync | Multi-camera shoots, live events | Timecode generator (e.g., Tentacle Sync) | High |
| Automated Sync | Projects with clean scratch audio | NLE software (Premiere Pro, Resolve) | Low |

This guide will walk you through each one, giving you the practical know-how to tackle any syncing challenge that comes your way. And when it comes time to share your work, our team at https://playpause.io/ has a suite of collaborative tools built for seamless video review and approval.

Getting Your Hands Dirty: Manual Syncing by Hand and Eye

Long before fancy software and timecode generators, editors relied on their eyes and ears to lock picture and sound together. This is a fundamental skill that every editor should have in their back pocket. It's not just about knowing the "old way" of doing things; it's about training your intuition and understanding the very essence of how sound and video relate to one another.

The classic tool for this job, of course, is the clapperboard, or slate. Its job is brilliantly simple: create one sharp moment in time that's both seen and heard. When that clapstick snaps shut, you get a crystal-clear visual marker in your video and a sharp, unmistakable spike in your audio waveform.

Here’s what a standard slate looks like, complete with the scene, shot, and take info that keeps everything organized back in the edit bay.

That visual snap and the corresponding audio peak are the two puzzle pieces you need to fit together on your timeline.

Lining Up the Slate in Your NLE

The process itself is straightforward, but it demands a bit of precision. In your editor of choice—whether it's Premiere Pro or DaVinci Resolve—drop your video clip on one track and the separate, high-quality audio on a track just below it.

Now, scrub through the video frame by frame. You're looking for that exact frame where the two halves of the clapperboard make contact. That's your visual sync point. Next, glance down at the waveform for your external audio. You should see a tall, pointy peak that lines up with the sound of the clap.

All you have to do is drag the audio clip until that waveform peak sits directly under the visual clap. Zoom way in on your timeline to get it perfect. You want it to be frame-accurate.
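If you'd rather work out the nudge than eyeball it, the arithmetic is simple. Here's a minimal sketch, assuming both clips start at the same point on the timeline and that you've already noted the clap frame and the sample position of the waveform peak. The function name and the example numbers are made up for illustration.

```python
def sync_offset_seconds(clap_frame: int, fps: float,
                        peak_sample: int, sample_rate: int) -> float:
    """Return how far to slide the audio clip, in seconds.

    Positive means move the audio later on the timeline,
    negative means move it earlier.
    """
    clap_time = clap_frame / fps            # when the sticks close in the picture
    peak_time = peak_sample / sample_rate   # when the spike occurs in the audio file
    return clap_time - peak_time


# Hypothetical take: sticks close on frame 48 of a 24 fps clip,
# and the spike sits 120,000 samples into a 48 kHz recording.
fps = 24.0
offset = sync_offset_seconds(clap_frame=48, fps=fps,
                             peak_sample=120_000, sample_rate=48_000)
print(f"Shift audio by {offset:+.3f} s ({offset * fps:+.1f} frames)")
```

In this made-up take the clap is at 2.0 s in the picture and 2.5 s in the audio, so the audio has to slide half a second (12 frames) earlier.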

This hands-on approach has deep roots. Way before digital timelines, the arrival of magnetic tape recording in the 1940s and 50s was a massive shift. The Nagra III recorder, introduced in 1958, became the gold standard for its rock-solid sync system. By the early 1960s, a staggering 90% of major films were using it, cementing the dual-system sound workflow we still use today. You can dive into a brief history of audio-video technology to see just how these foundational tools came to be.

What if You Don't Have a Slate?

No slate? No problem. The principle is exactly the same—you just have to create your own audio-visual cue.

The easiest way is a good, loud handclap right in front of the camera. The motion of your hands coming together gives you the visual marker, and the sharp sound creates that all-important spike in the audio waveform. This trick is an absolute lifesaver on run-and-gun shoots, documentaries, or any time a formal slate just isn't practical.

Here are a few other tricks I've used over the years:

A Sharp Word: In a pinch, even a distinct consonant can work. If your subject says a word with a hard 'P' or 'B' sound, like "point" or "begin," you can often spot the corresponding "plosive" spike in the waveform and match it to their lip movement. It takes some finesse, but it's a surprisingly reliable way to sync audio and video when you have nothing else.

A Quick Tap: Tapping a pen on a table, clicking a camera lens cap shut, or even dropping a set of keys can work. Anything that creates a fast, identifiable sound alongside a clear visual action can be your sync point.

The real key here is consistency. Whatever method you choose, do it at the start of every single take. It will make the process in the edit bay a thousand times faster and save you from a world of frustration. Mastering these manual techniques means you'll never be stuck, even when gear fails or a shoot is rushed. You'll have a rock-solid understanding of how picture and sound truly lock together.

Using Timecode for Bulletproof Synchronization

When you graduate from a simple one-camera setup to something more complex—like a multi-cam interview, a documentary, or a live event—manually syncing your clips becomes a special kind of post-production hell. This is precisely when you call in the big guns: timecode.

Think of timecode as a digital stamp. It gives every frame of video a unique address in hours, minutes, seconds, and frames, and stamps each audio file with the matching start time. Instead of hunting for a clap on a slate, you're giving your editing software an exact set of coordinates to work with.

When you tell your NLE to sync using timecode, it's not guessing. It’s simply reading those addresses and snapping everything into perfect alignment. It's the closest thing we have to a "set it and forget it" solution, turning what could be hours of tedious work into a single, satisfying click.
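To make the "reading addresses" idea concrete, here's a rough sketch of the arithmetic involved. It assumes non-drop-frame timecode at a whole-number frame rate, and the function names and start times are invented for the example. It is not how any particular NLE implements its sync.

```python
def timecode_to_frames(tc: str, fps: int) -> int:
    """Convert non-drop-frame 'HH:MM:SS:FF' timecode to a frame count."""
    hours, minutes, seconds, frames = (int(part) for part in tc.split(":"))
    if frames >= fps:
        raise ValueError(f"frame value {frames} is not valid at {fps} fps")
    return ((hours * 60 + minutes) * 60 + seconds) * fps + frames


def offset_in_frames(video_start_tc: str, audio_start_tc: str, fps: int) -> int:
    """Frames between the two start addresses.

    Negative means the audio recording started before the video.
    """
    return timecode_to_frames(audio_start_tc, fps) - timecode_to_frames(video_start_tc, fps)


# Hypothetical clip pair from a jam-synced shoot at 24 fps.
print(offset_in_frames("01:00:10:00", "01:00:08:12", fps=24))
```

With these example values the audio started 36 frames (1.5 seconds) ahead of the camera, so that's exactly how far the software shifts it. Drop-frame timecode needs extra handling that this sketch leaves out.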

The infographic below shows the manual process that timecode completely automates for bigger, more demanding projects.

You can see the classic elements—the slate, the clap, the waveform—all the little cues an editor has to painstakingly line up by hand. Timecode makes all of that obsolete.

How Timecode Works on Set

So how does this actually work in the real world? The magic comes from small, dedicated timecode generator boxes. These little devices are the heart of the whole operation, making sure every piece of equipment on your set is marching to the exact same beat.

It all starts with a process called jam syncing. At the beginning of the shoot day, you gather all your timecode boxes (from brands like Tentacle Sync or Ambient Lockit) and connect them to a single "master" clock. They all sync up to its time, and from that moment on, they run independently, held in lock-step by hyper-accurate internal crystals.

The workflow is pretty straightforward:

You attach one box to each camera, usually feeding the timecode signal into an audio input.

You connect another box to your external sound recorder.

That’s it. Now every device is recording the exact same continuous timecode, creating an unbreakable, universal reference for the entire shoot.

Locking different devices together isn't a new concept, but its evolution has been massive. The shift to digital in the 1980s was a huge leap, especially with gear like Sony's PCM-F1 Digital Recording Processor. It’s wild to think that by the mid-1980s, this kind of tech was already used on over 70% of major productions, really setting the stage for the digital sync methods we depend on today. If you're a history buff, you can read more about the dawn of digital and film sound sync to see just how far we've come.

When Is Timecode an Absolute Must?

Look, for a simple talking-head video, a timecode setup is probably overkill. But for certain jobs, it’s not just a nice-to-have; it's completely non-negotiable. Investing in this workflow isn't about convenience—it's about protecting your project from expensive mistakes and frustrating delays in the edit bay.

Here are a few scenarios where timecode is your best friend:

Multi-Camera Interviews: Every angle—the wide shot, the two close-ups—will line up perfectly without a single frame of guesswork.

Live Events and Concerts: You're often dealing with multiple cameras and a direct audio feed from the soundboard, all recording for hours. Timecode is the only way to ensure it all syncs flawlessly.

Documentary and Reality TV: In these run-and-gun environments, cameras and sound are constantly starting and stopping. A continuous timecode clock is the only reliable anchor.

Complex Narrative Shoots: Think of any scene with multiple cameras where slating every single take just isn't practical.

At the end of the day, using timecode is an investment in your sanity and your schedule. For any project with more than one camera and a separate audio source, it provides a level of bulletproof synchronization that manual and even waveform-based methods simply can't guarantee, especially over long takes where audio drift becomes a real problem.

Automated Syncing Tools and AI Workflows

While manually syncing clips is a core skill every editor should have, it's not always the most practical approach. When you're staring down a timeline loaded with footage from a multi-camera shoot, it's time to let your software do the heavy lifting.

Modern NLEs like DaVinci Resolve and Premiere Pro have some seriously powerful built-in tools for automatically syncing your sound and video. These features are a godsend, but they aren’t magic. They work by using waveform analysis to match the audio patterns between your clips.

Your NLE essentially "listens" to the low-quality scratch audio from your camera's microphone and compares it to the high-quality audio from your dedicated recorder. It scans both sound waves, looking for identical peaks and valleys, and then snaps them into perfect alignment. When it works, it feels like cheating—dozens of clips locked in sync with just a few clicks.
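The exact algorithms inside Premiere Pro and Resolve aren't published here, so treat the following as an illustration of the general idea only: a brute-force cross-correlation that slides one waveform against the other and keeps the offset where they agree most. The sample values are toy data, and the function name is hypothetical.

```python
def best_lag(reference, other, max_lag):
    """Return the lag (in samples) where `other` best lines up with `reference`.

    A positive lag means the shared sound appears `lag` samples later
    in `other` than it does in `reference`.
    """
    best, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        score = 0.0
        for i, ref_value in enumerate(reference):
            j = i + lag
            if 0 <= j < len(other):
                score += ref_value * other[j]   # large when peaks and valleys agree
        if score > best_score:
            best, best_score = lag, score
    return best


# Toy waveforms: the same short burst, 3 samples into the scratch track
# and 7 samples into the external recording.
burst = [0.2, 1.0, -0.6, 0.3]
scratch = [0.0] * 3 + burst + [0.0] * 9
external = [0.0] * 7 + burst + [0.0] * 5

print(best_lag(scratch, external, max_lag=8))
```

Here the burst shows up 4 samples later in the external track, so sliding that clip 4 samples earlier lines the two up. Real tools work on far longer signals and use much faster methods, but the principle of scoring candidate offsets is the same.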

Getting the Most from Built-In Sync Tools

For these automated tools to succeed, they need one thing above all else: a clean reference. The quality of your camera's scratch audio is everything. If it's too quiet, muffled by wind noise, or—even worse—wasn't recorded at all, the software has nothing to latch onto, and the sync will fail.

Before you ever roll camera, do a quick sound check to make sure the internal mic is on and the levels are decent. It doesn't have to be pretty, but it absolutely has to be audible. This simple habit can mean the difference between a one-click sync and hours of tedious manual labor.


The point of automation isn't to make an editor's skills obsolete. It's to handle the repetitive, non-creative chores so you can get to the good stuff—the storytelling, the pacing, and the creative choices—that much faster.

When to Use a Dedicated Syncing Plugin

The native tools in Resolve and Premiere are fantastic for most projects. But they have their limits. When you're dealing with a truly massive project—think hundreds of clips from multiple cameras shot over several days—your NLE can start to choke. It might slow to a crawl, freeze up, or just give up on finding matches.

This is where a dedicated, third-party plugin like Red Giant's PluralEyes becomes a lifesaver.

PluralEyes is built for one job and one job only: syncing audio and video. Because it's a standalone application, it's highly optimized and can power through enormous, complex projects that would bring a standard NLE to its knees. It analyzes everything, intelligently corrects for audio drift on longer takes, and then gives you an XML file to import right back into your timeline with everything perfectly aligned.

The interface is all business, giving you a clear visual overview of all your media files and how they relate to each other.

This kind of layout makes it incredibly easy to spot if a camera file is missing its corresponding audio before you even start the process.

Comparing NLE Sync Tools vs Third-Party Plugins

So, when should you stick with your NLE's built-in tools, and when is it time to invest in a dedicated plugin? While the lines can sometimes blur, understanding their core strengths will help you choose the right tool for the job.

Here’s a quick breakdown of how they stack up.

| Feature | Premiere Pro / DaVinci Resolve | PluralEyes | Best Use Case |
|---|---|---|---|
| Speed & Convenience | Very fast for small to medium projects. | Requires an extra export/import step. | Native NLE tools are perfect for most daily editing tasks. |
| Handling Large Batches | Can become slow or unstable with hundreds of clips. | Highly optimized for massive amounts of footage. | PluralEyes shines on documentaries or event coverage. |
| Drift Correction | Basic capabilities. | Advanced algorithms to fix audio drift automatically. | Essential for long-form interviews or concert recordings. |
| Cost | Included with your NLE subscription. | Requires a separate purchase or subscription. | A worthwhile investment for professionals on complex projects. |

Ultimately, whether you rely on a native NLE feature or a specialized plugin, the strategy is the same. Start with clean scratch audio, understand the limits of your tools, and know when it's time to call in the big guns. Get that right, and you can make audio sync a fast and painless part of your workflow.

How to Fix Common Audio Sync Problems

Even with the best planning, you're going to hit a point where your audio and video just won't play nice. It’s one of the most persistent headaches in post-production, but almost every sync problem has a logical cause and, thankfully, a pretty straightforward fix. The trick is to troubleshoot calmly instead of immediately pulling your hair out.

The most infamous issue is audio drift. This is that maddening situation where your sound and picture start off perfectly aligned but slowly, almost sneakily, fall apart over a long clip. At the 10-second mark, everything's great. By the 20-minute mark, the lip-sync is a mess. This isn't just bad luck; it’s a technical problem we can solve.

Diagnosing and Fixing Audio Drift

Nine times out of ten, audio drift is caused by the internal clocks in your camera and audio recorder running at fractionally different speeds. A minuscule difference of 0.1%, which you’d never notice on a short take, will blossom into a major sync disaster during a long interview or live event recording.

The other major culprit is a mismatch in audio sample rates. If your camera records its scratch audio at 48 kHz (the professional video standard) but your external recorder was accidentally set to 44.1 kHz (the standard for music CDs), you're in for a world of hurt. The two files store a different number of samples for every second of sound, so if either one is interpreted at the wrong rate, it plays back faster or slower than the other, creating that dreaded drift.
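It helps to put numbers on both causes. This is a back-of-the-envelope sketch, assuming a constant speed difference for the whole take. The function name is made up, and the sample-rate case assumes the worst-case scenario where a 44.1 kHz file is played back as if it were 48 kHz.

```python
def drift_seconds(duration_s: float, speed_ratio: float) -> float:
    """Sync error built up after `duration_s` seconds when one device
    runs at `speed_ratio` times the speed of the other."""
    return duration_s * abs(speed_ratio - 1.0)


# Clock mismatch: a 0.1% speed difference over a 20-minute interview.
clock_drift = drift_seconds(20 * 60, 1.001)
print(f"Clock mismatch: {clock_drift:.2f} s, about {clock_drift * 24:.0f} frames at 24 fps")

# Sample-rate mix-up: a 44.1 kHz file treated as 48 kHz, over one minute.
rate_drift = drift_seconds(60, 48_000 / 44_100)
print(f"Sample-rate mix-up: {rate_drift:.2f} s after one minute")
```

A 0.1% clock difference adds up to roughly 1.2 seconds over 20 minutes, while a misread sample rate is off by more than 5 seconds after a single minute. That gap is a handy diagnostic: slow, subtle drift points to clocks, and fast, obvious drift points to a sample-rate mix-up.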

Fortunately, most modern NLEs have tools built for exactly this scenario.

In Premiere Pro, you can grab the Rate Stretch Tool (just hit 'R' on your keyboard) to gently speed up or slow down the audio clip until it fits.

DaVinci Resolve has a feature called "Elastic Wave" in its Fairlight audio panel, which lets you re-time audio without affecting the pitch.

To use these tools, just jump to the very end of your clip and find one last, clear sync point—a hard "t" or "k" sound is perfect. Then, just stretch the audio waveform until it snaps back into place. The adjustment is usually so small that no one will ever hear it.
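If you want to know how big that stretch is before you drag anything, two sync points are all you need. A minimal sketch, assuming you've noted one sync point near the start and one near the end, in both the picture and the audio clip; the function name and timings are hypothetical.

```python
def stretch_factor(video_first: float, video_last: float,
                   audio_first: float, audio_last: float) -> float:
    """Return the duration multiplier for the audio clip.

    Below 1.0 the audio must be shortened (sped up),
    above 1.0 it must be lengthened (slowed down).
    """
    video_span = video_last - video_first
    audio_span = audio_last - audio_first
    if video_span <= 0 or audio_span <= 0:
        raise ValueError("the last sync point must come after the first")
    return video_span / audio_span


# Hypothetical interview: sync points 1200 s apart in the picture,
# but 1201.2 s apart in the external audio.
factor = stretch_factor(5.0, 1205.0, 5.0, 1206.2)
print(f"Scale audio duration to {factor * 100:.3f}% of its original length")
```

In this example the audio needs to end up at about 99.9% of its original length, which is why the correction is normally inaudible.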

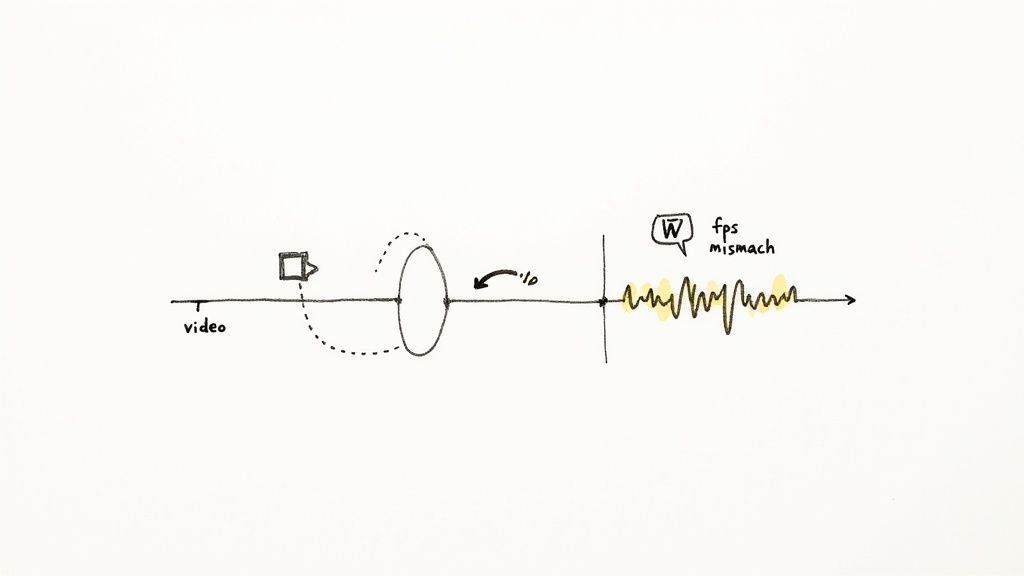

The Frame Rate Mismatch Trap

Another classic sync problem comes from mismatched frame rates. The most common offender here is the tiny but critical difference between 23.976 fps and a true 24 fps. Many cameras shoot at 23.976 (often called 23.98) for broadcast compatibility, while some cinema cameras or external gear might be set to a straight 24.

When you drop both into the same timeline, your editing software has to either drop or duplicate frames to make them conform, which can introduce jerky playback and, you guessed it, sync issues. The fix starts before you even think about editing. Always check your source files and make sure they are conformed to a consistent frame rate for your entire project. This gets every clip playing by the same rules.
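To see why such a small difference matters, consider what happens if footage shot at a true 24 fps gets interpreted at 23.976 fps (precisely 24000/1001) without any frames being dropped. This sketch uses exact fractions to avoid rounding noise; the one-hour clip length is just an example.

```python
from fractions import Fraction

NTSC_FILM = Fraction(24000, 1001)   # the rate usually written as 23.976 or 23.98
TRUE_24 = Fraction(24)


def playback_duration(frame_count: int, playback_fps: Fraction) -> Fraction:
    """Seconds a clip lasts when its frames are played at `playback_fps`."""
    return Fraction(frame_count) / playback_fps


frames = 24 * 3600                              # one hour shot at true 24 fps
as_shot = playback_duration(frames, TRUE_24)
reinterpreted = playback_duration(frames, NTSC_FILM)
drift = reinterpreted - as_shot

print(f"As shot: {float(as_shot):.1f} s")
print(f"Played at 23.976: {float(reinterpreted):.1f} s")
print(f"Drift against separately recorded audio: {float(drift):.1f} s")
```

The picture runs 3.6 seconds long over the hour while the audio stays put, which is more than enough to wreck lip-sync by the end of a long take.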

The surest way to synchronize sound and video and avoid these headaches is to get everyone on the same page before the shoot even begins. Make sure all cameras are set to the same frame rate and all audio recorders are locked to the same sample rate and bit depth, usually 48 kHz at 24-bit. A few minutes of prep will save you hours of post-production misery.

When Automated Sync Fails: A Quick Checklist

When your NLE’s automatic sync feature gives you an error and refuses to cooperate, don’t immediately resort to manual syncing. It's often a simple fix. Run through this quick diagnostic checklist first.

Is the Scratch Audio Any Good? Pop open the camera clip and actually listen to the on-camera audio. If it’s dead silent, completely blown out with noise, or so muffled you can't make anything out, the software has no waveform to analyze.

Are the Clips a Similar Length? If the sound recordist hit record a full minute before the camera started rolling, the software might just give up trying to find a match. Trim the dead air off the front of the audio clip and try again.

Are There Competing Sounds? If you have multiple clips with very similar dialogue—like three takes of the same line back-to-back—the sync tool can get confused. Isolate the clips you want to sync and try them one at a time.

Is It Just a Glitch? Hey, it happens. Sometimes the easiest solution works. Try restarting your editing software or clearing the media cache before you attempt the sync again.

Final Checks and Exporting Best Practices

So, you've put in the time and meticulously synced all your audio and video. It feels like the job is done, but don't hit that export button just yet. A small oversight in these final stages can easily undo all your hard work, forcing you back into the edit bay. Creating a solid routine for your final checks and exports is the last, crucial step to guaranteeing a perfectly synchronized delivery.

Before you export, give your timeline one last comprehensive review. It's not enough to just watch it through from the start. I always recommend spot-checking different sections. Jump to the beginning, then a random point in the middle, and finally check near the very end. This quick three-point check is surprisingly effective at catching subtle audio drift that might have snuck in over the duration of a long clip.

The Dangers of Variable Frame Rates

One of the biggest culprits for sync issues I see these days is footage from smartphones or screen recordings. This type of media is often captured using a variable frame rate (VFR), where the frame rate fluctuates to conserve processing power. The problem? Professional editing software is designed to work with a constant frame rate (CFR). When you drop VFR footage into a CFR timeline, you're practically asking for trouble—it's a common cause of that frustrating, unpredictable audio drift.

Always convert VFR footage to a constant frame rate before you start editing. You can use a tool like HandBrake or Adobe Media Encoder for this. It’s an extra step, but it will save you from a world of sync-related frustration down the line.
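If you have a folder full of phone clips, scripting the conversion beats clicking through a GUI. Here's one possible sketch that builds a command for HandBrake's command-line version. It assumes `HandBrakeCLI` is installed and on your PATH, the file names are placeholders, and the target frame rate should match your project. The script only prints the command, so nothing is converted until you choose to run it.

```python
import shlex


def build_cfr_command(source: str, target: str, fps: int) -> list[str]:
    """Build a HandBrakeCLI command that re-encodes `source` at a constant frame rate."""
    return [
        "HandBrakeCLI",
        "-i", source,      # input file
        "-o", target,      # output file
        "--cfr",           # force constant frame rate output
        "-r", str(fps),    # target frame rate
    ]


# Placeholder file names for illustration.
cmd = build_cfr_command("phone_clip.mp4", "phone_clip_cfr.mp4", fps=30)
print(shlex.join(cmd))

# To actually run the conversion, pass the list to subprocess:
#   import subprocess
#   subprocess.run(cmd, check=True)
```

Building the command as a list rather than a single string keeps file names with spaces from breaking the call.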

Setting Up Your Export for Success

Once you're confident the sync is locked in, your export settings are the final checkpoint. The right codec and container are essential for making sure your audio and video stay perfectly married.

For most final deliveries, sticking with a standard codec like H.264 or H.265 (HEVC) inside an MP4 or MOV container is your safest bet. These formats are universally supported and built to maintain sync integrity no matter where the video is played.

Before you export, take a second to confirm your audio settings match your project's specs. This usually means a sample rate of 48 kHz and the AAC audio codec. And the absolute final step? Always, always watch the exported file itself before you send it anywhere. It’s the ultimate confirmation that everything looks and sounds exactly as it should.

If your team is wrestling with tricky delivery specs or needs a more efficient way to gather feedback on final versions, a collaborative video review platform can make a huge difference. Feel free to contact our team at PlayPause to see how we can help simplify your post-production workflow.

Questions That Always Come Up

Even after you've done this a thousand times, certain questions still pop up on nearly every project. Let's tackle some of the most common snags editors run into when trying to get audio and video to play nice.

What if I Forgot to Use a Clapper or Slate?

Don't panic; it happens to the best of us. If you forgot the slate, you can still get a perfect sync. Just look for any other sharp, distinct sound whose source is visible in the shot.

Some great alternatives include:

A clear, loud hand clap on camera.

A door slamming shut.

Even a percussive consonant like a hard "P" or "T" sound from the speaker.

Zoom all the way in on your NLE’s timeline until you can see the individual audio waveforms. Find that sharp peak on both your camera audio and your external recorder, line them up perfectly, and you're back in business. It takes a bit more finesse, but it's a lifesaver.

My Audio and Video Fall Out of Sync Over Time. What Gives?

That's called audio drift, and it's a classic problem with long recordings. It happens because the internal time-keeping crystals in your camera and audio recorder aren't exactly the same. They're ticking at ever-so-slightly different speeds.

Even a tiny difference, say 0.1%, is enough to cause a noticeable delay after 10 or 20 minutes of continuous recording. It can also be caused by a mismatch in audio sample rates, like recording at 44.1 kHz on one device and 48 kHz on another. This is precisely why using timecode is the gold standard, but keeping your takes shorter is a solid workaround.

Why Won't My Software's Auto-Sync Feature Work?

Nine times out of ten, when an automated sync fails, it's because of bad reference audio from the camera. The software is trying to match the waveform from your camera's microphone to the one from your dedicated audio recorder. If that camera audio (often called "scratch audio") is too noisy, too quiet, or wasn't even recorded, the software has nothing to lock onto.

Pro Tip: The single best thing you can do to prevent auto-sync failure is to ensure your camera is recording the cleanest possible scratch audio on set. A few seconds spent checking levels before you roll will save you hours of manual syncing later.

Sometimes, the failure happens even with decent scratch audio. This can occur if the sound sources are just too different—for example, trying to match a crisp, clean lavalier mic with a distant, echoey on-camera mic can confuse the algorithm.